One of the more pragmatic ways to jump on the current AI hype and get some value out of it is to use semantic search.

Semantic search in itself is a very simple concept: you have a bunch of documents and you want to find the most similar ones to a given query.

While the technology behind it is quite complex and very mathematical, it is relatively easy to use. There are many libraries and development toolkits that provide the functionality out of the box. You just need to feed them your data and they will do the rest with just a few lines of code.

When you first experiment with semantic search, you will probably be shocked by how good the results look at first glance. But when you dig deeper, you will find that they are not quite as good as hoped. Sometimes you get a lot of documents that are very similar to your query, but none of them really answers your question. And sometimes you know that a very specific document has the exact answer to your question, but it does not show up in the search results at all, and several other documents that are somewhat related but less accurate are shown instead.

While setting up a semantic search on our internal Thinktecture knowledge base, we ran into exactly this problem: we have a lot of documents that are very similar to each other, and a lot of documents that are relatively similar to the query, but the searches were still quite “fuzzy” and not as accurate as we had hoped.

To understand what the underlying problem is, we first need to understand how semantic search works. And then, when we realize what the actual issue is, we can try to find a solution for it.

Semantic Search

There are basically only four steps involved in semantic search.

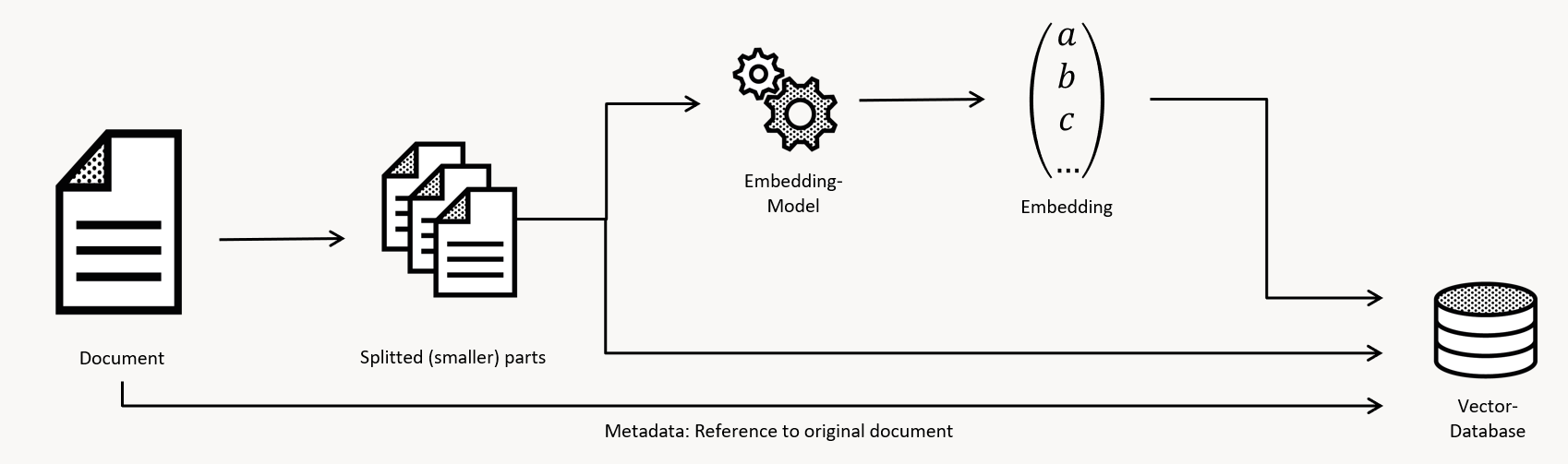

Step 1: Preparation

You take your documents and put them through a bit of tooling to prepare the data. In most cases that involves splitting large documents into several smaller chunks. Pieces of content that are too large tend to cover too many topics, which makes it harder to determine the most relevant piece of information.
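To make the chunking step a bit more concrete, here is a minimal sketch that packs consecutive paragraphs into chunks up to a size limit. The function name `split_into_chunks` and the `max_chars` parameter are made up for this illustration, and real toolkits ship more capable splitters (with overlap, token counting and so on).

```python
def split_into_chunks(text: str, max_chars: int = 1000) -> list[str]:
    """Pack consecutive paragraphs into chunks of at most max_chars characters."""
    # Paragraphs are assumed to be separated by blank lines
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current = ""
    for paragraph in paragraphs:
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars:
            current = candidate
        else:
            if current:
                chunks.append(current)
            # A single paragraph longer than max_chars is kept whole here;
            # a real splitter would break it up further.
            current = paragraph
    if current:
        chunks.append(current)
    return chunks
```

Splitting on paragraph boundaries keeps sentences that belong together in the same chunk, which is usually what you want before creating embeddings.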

Step 2: Indexing

The smaller chunks of content are transformed into “embeddings”. To do this, the content is passed to an AI model that is specifically trained to create embeddings. The model takes the input and finds a meaning in it. Since a computer only works on numbers, the meaning is represented by several numbers, like an array of positions on several axes, or dimensions. This is called a vector.

I try to imagine this as a bunch of abstract measurements. On each axis, an abstract concept about the input is encoded and a value is assigned to it. The abstract concepts would be something like “How wet is the content? How business is the content? How animal is the content? How color is the content? How question is the content?” and so on. Taking all these measurements positions the document somewhere “embedded in the AI’s brain”. And that AI’s brain is a multidimensional space. Depending on the model you have, there are hundreds if not several thousands of different dimensions. The result of this step is a “coordinate” of that chunk of data “within the embedding model’s brain”, which is called a vector or an embedding.
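The mental picture of "one measurement per axis" can be turned into a toy example. This is explicitly not how a real embedding model works: the three axes and their keyword lists below are hand-picked assumptions, while a real model learns hundreds or thousands of dimensions that are not human-readable. It only shows the shape of the result, which is a list of numbers per text.

```python
import re

# Hypothetical, hand-picked axes for illustration only. A real embedding
# model learns its dimensions during training.
AXES = {
    "wet": {"rain", "water", "sea", "river"},
    "business": {"invoice", "customer", "contract", "revenue"},
    "animal": {"dog", "cat", "bird", "fish"},
}

def toy_embed(text: str) -> list[float]:
    """Return one value per axis: the share of words that match that axis."""
    words = re.findall(r"[a-z]+", text.lower())
    if not words:
        return [0.0] * len(AXES)
    return [
        sum(word in keywords for word in words) / len(words)
        for keywords in AXES.values()
    ]

# One number each for "wet", "business" and "animal"
print(toy_embed("The dog ran through the rain"))
```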

Step 3: Storage

The embedding is stored in a database, together with the ID and also often the complete content of the document it belongs to. In general, a simple key-value store would be sufficient, but to search for a document you would have to look at all the keys for each search operation, and this is not very efficient. For this reason there are specialized databases that are optimized for this kind of search. They are called vector databases, or vector stores. Vector databases build indices based on the embedding values, and they also implement algorithms that can search for similar vectors in the index very efficiently. Depending on the type of database you can also store additional data (like the full chunk of the content) and also additional metadata (like the title of the document, the author, the date of creation, and so on).
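To see why a plain key-value store gets slow, it helps to look at what a search over it would have to do. The following sketch keeps all records in a list and compares the query against every single one. The names `Record` and `NaiveVectorStore` are invented for this example, and the squared Euclidean distance is just one possible measure.

```python
from dataclasses import dataclass, field

@dataclass
class Record:
    doc_id: str
    embedding: list[float]
    content: str
    metadata: dict = field(default_factory=dict)

class NaiveVectorStore:
    """Keeps all records in a list and scans every one of them per query."""

    def __init__(self) -> None:
        self.records: list[Record] = []

    def add(self, record: Record) -> None:
        self.records.append(record)

    def nearest(self, query: list[float], k: int = 3) -> list[Record]:
        def squared_distance(record: Record) -> float:
            return sum((a - b) ** 2 for a, b in zip(record.embedding, query))
        # Every record is touched on every search. This full scan is the
        # work that the index of a real vector database avoids.
        return sorted(self.records, key=squared_distance)[:k]
```

For a few hundred documents this is perfectly fine. With millions of chunks, the full scan per query is what makes an indexed vector database attractive.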

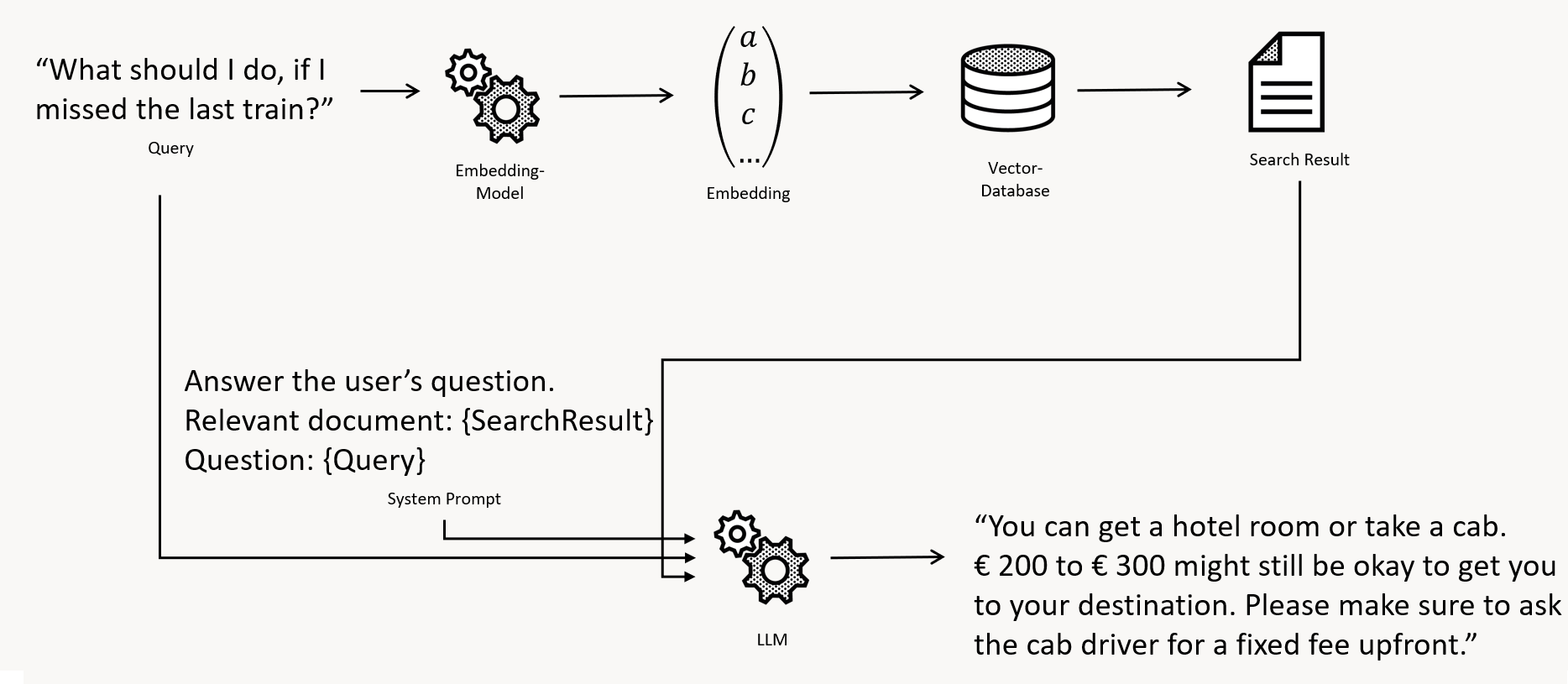

Step 4: Search

The query is passed to the same AI model that was used to create the embeddings. The result of this is a vector that represents the meaning of the query. It is very important to use the exact same model, as different AI models have different “brains” with different dimensions, and the coordinate of a document in one embedding model will be totally different from the one in another model. This vector is then passed to the vector database, and the database returns the most similar vectors. The similarity is measured by the abstract semantic distance between the embeddings of the query and the document. Sort of like “Where in the multidimensional AI’s brain is this document, where is the question, and how far apart are they?”
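The text above does not say which distance measure is used, and that depends on the database and its configuration. Cosine similarity is one common choice, so here is a small sketch of it, assuming plain Python lists as vectors. The function names are made up for this example. Note that the length check only catches the most obvious model mismatch: two different models can produce vectors of the same length that still cannot be compared meaningfully.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors have different dimensions (different models?)")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

def rank(query: list[float], documents: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Score every document vector against the query, best match first."""
    scored = [(doc_id, cosine_similarity(query, vector))
              for doc_id, vector in documents.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```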

Found! Now what?

Technically, that’s it. You can now search for documents based on their meaning.

In a lot of cases the full text of the found documents and the question of the user are passed to a Large Language Model (LLM) like GPT to formulate a full response that is then returned to the user. This is called the RAG pattern (Retrieval Augmented Generation). But you can also simply display the list of found documents ranked by their similarity (or distance), and have the user find the real answer in the documents.
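The "augmented" part of RAG is mostly string handling: the retrieved texts are put in front of the question before the LLM is called. A minimal sketch of that step could look like this. The function name `build_rag_prompt` and the prompt wording are assumptions for illustration, and the actual call to the LLM is left out.

```python
def build_rag_prompt(question: str, documents: list[str]) -> str:
    """Combine retrieved documents and the user question into one prompt."""
    context = "\n\n".join(
        f"[Document {number}]\n{text}"
        for number, text in enumerate(documents, start=1)
    )
    return (
        "Answer the question using only the documents below. "
        "If they do not contain the answer, say so.\n\n"
        f"{context}\n\n"
        f"Question: {question}"
    )
```

The resulting string is what gets sent to the language model, which is also why the number and size of the retrieved documents matter later on.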

The problem with embeddings

The main problem with that approach is, like pretty much everything in the field of AI, the quality of the input data.

The AI embedding model that is used to create the vectors is trained on a very large corpus of data. The more data, the better. The more diverse the data, the better. These models are trained on Wikipedia, the world’s history, animals, plants, seas, stars, cities, maps and so on.

And now, please be honest with yourself: how much data do you have? And how diverse is your data really?

In most businesses, your documents have to do with your business. Not with astronomy, not with animals or plants. They all have to do with your business problems in your field of expertise. Probably all written in a very formal style. By experts in their field. And to not confuse the reader, most authors will try to restrict their vocabulary to your domain-specific language and be very specific.

So, naturally, when you put your documents into the huge multidimensional brain of an AI embedding model, they will probably all be very close to each other. And when you search for a document, you will get a lot of documents that are very similar to the query, but not necessarily the one you are looking for.

To make things worse, a query is usually a question. Your documents are usually a collection of facts, statements, numbers, and so on. Questions and documents are not very similar. So while all documents are very near to each other, a query is by nature usually further away from the bulk of your documents. This means that while you will get a lot of documents that are similar to your query, you might simply not get the specific one that answers your question, as it is still a needle in your haystack.

So this explains why our semantic search results often aren’t as good as they could be – at least initially.

Improving the embeddings

So, what can we do about it? How can we improve the quality of our embeddings?

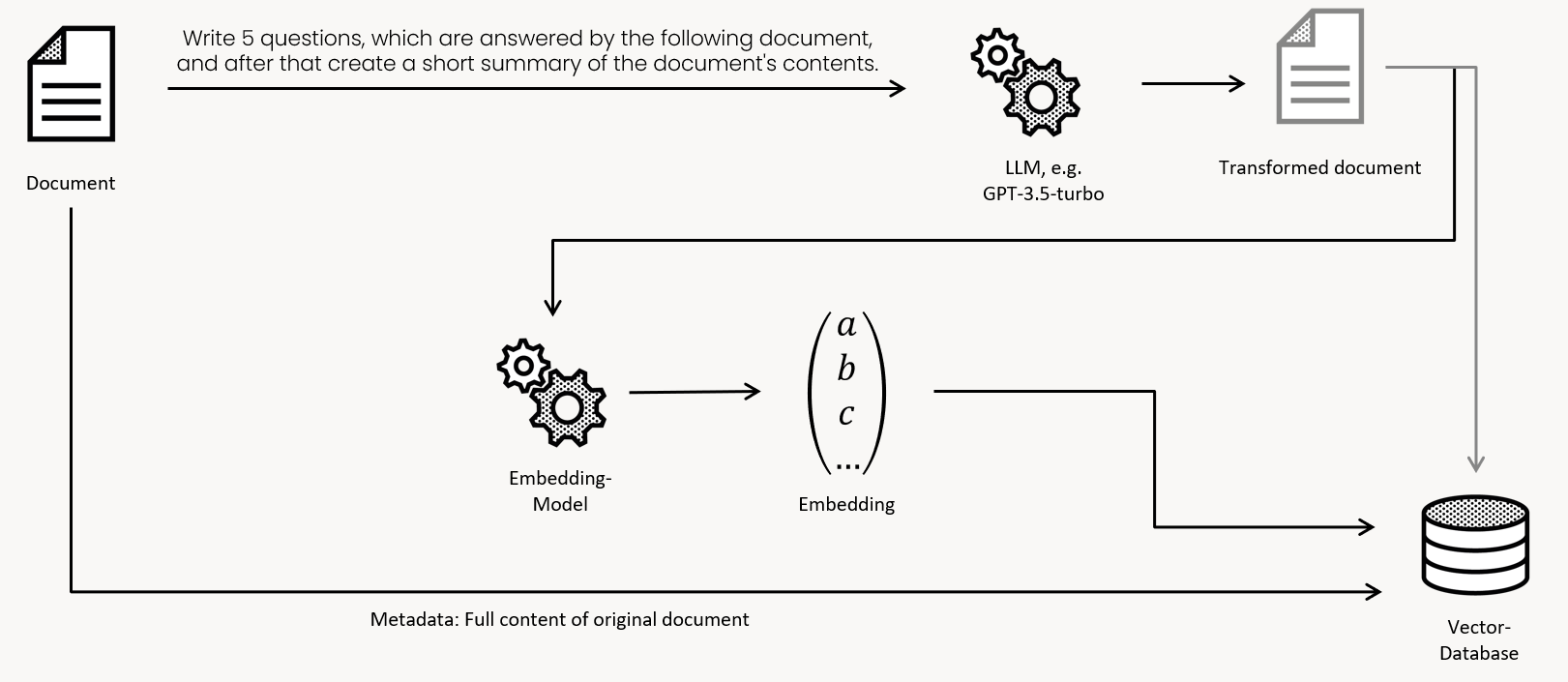

The main idea is to create the embeddings from content that is closer to our questions. And what is closer to a question about a specific topic than a document about this topic? Correct: another question about this specific topic.

So we need a way to get questions for our documents and create the embeddings for these. Luckily, that is also what language models excel at. GPT stands for Generative Pre-trained Transformer, and transforming our content into questions is exactly what we need.

In this example we will use LangChain to load a lot of content in the form of Markdown files from a directory (our knowledge base). We will then use an LLM to transform each document into a few questions about its specific contents, and store these transformed documents in a vector database with their embeddings. For this sample the database will be Chroma, as it runs locally and stores the data in files.

Creating questions and indexing

First, the code:

```python
# File: index.py

# Prepare the transformation chain
from langchain.chat_models.openai import ChatOpenAI
from langchain.prompts import PromptTemplate
from langchain.chains import LLMChain

transformation_llm = ChatOpenAI(model="gpt-3.5-turbo-16k", temperature=0)
transformation_prompt = PromptTemplate(
    input_variables=["input"],
    template=(
        "Create six different questions that the following document is going to answer. "
        "Each question should be about a different specific topic. "
        "After that, create a short summary of the document. "
        "This is the document: {input}"
    ),
)
transformation_chain = LLMChain(llm=transformation_llm, prompt=transformation_prompt)

# Function to post-process the content
import copy
from langchain.schema import Document

def content_postprocess(doc: Document) -> Document:
    # Keep the original text, because page_content is overwritten below
    doc.metadata["original_content"] = copy.copy(doc.page_content)
    # Replace the content with the generated questions and summary
    doc.page_content = transformation_chain.run(doc.page_content)
    return doc

# Load the documents
from langchain.document_loaders import DirectoryLoader, UnstructuredMarkdownLoader

contents = DirectoryLoader(
    "../knowledgebase",
    glob="**/*.md",
    loader_cls=UnstructuredMarkdownLoader,
).load()
docs = list(map(content_postprocess, contents))

# Prepare the store
from langchain.embeddings import OpenAIEmbeddings
from langchain.vectorstores import Chroma

embeddings = OpenAIEmbeddings(model="text-embedding-ada-002")
store = Chroma(embedding_function=embeddings, persist_directory="./db")

# Index the documents
store.add_documents(docs)
store.persist()
```

The indexing is quite straightforward: First, we set up the chain that runs each document through our model to transform it into something that is more similar to the expected search query.

We then use the standard LangChain loaders to load our Markdown files from the directory and run each document through the transformation chain. The issue here is that this overwrites the original content, which we will need after retrieval, so we store it in the metadata of the document.

Then we set up the vector store and add the documents to it. The vector store will create the embeddings for each transformed document and store them in the database.

Retrieval

Now that we have our documents indexed, we can search for them. The code for that is also quite simple:

```python
# Prepare the store
from langchain.embeddings import OpenAIEmbeddings
from langchain.vectorstores import Chroma

embeddings = OpenAIEmbeddings(model="text-embedding-ada-002")
store = Chroma(embedding_function=embeddings, persist_directory="./db")

# Function to post-process the content
def retransform_content(doc):
    # Restore the original text that was saved in the metadata during indexing
    if doc.metadata.get("original_content") is not None:
        doc.page_content = doc.metadata["original_content"]
    return doc

from langchain.agents import tool

@tool
def search_tool(query: str):
    "Searches and returns documents from our knowledge base as well as articles from our website. The input is a full question that asks for specific information."
    document_count = 3
    # The number of results is configured on the retriever via search_kwargs
    retriever = store.as_retriever(search_kwargs={"k": document_count})
    docs = retriever.get_relevant_documents(query)
    docs = list(map(retransform_content, docs))
    return docs

tools = [search_tool]
```

In this specific case the document search is a tool that is passed to a chatbot using the functions agent chain type, so the LLM can decide when to search for documents.

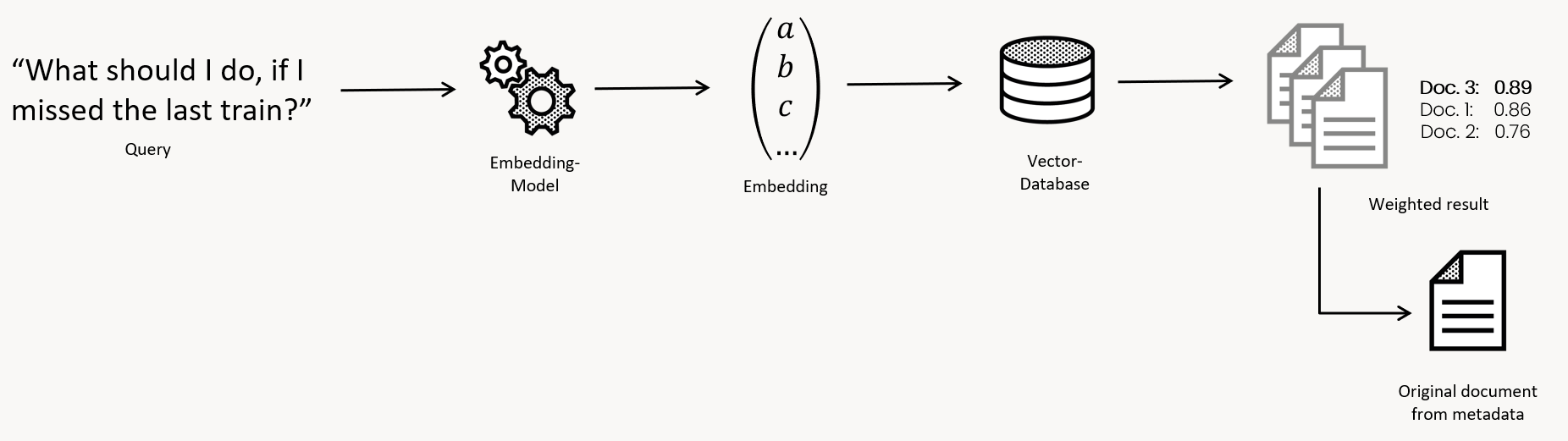

After retrieving the documents, we restore the original content from the metadata and then return the documents.

The tool returns the 3 documents that are most similar to the query. You can try to increase the number of documents to search for, but in this case the documents are passed into another call to the LLM to generate an answer to the query, and several larger documents might not fit into the context size of the LLM. This is why we limit the number of documents to 3.
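A fixed limit of 3 is the simplest way to stay within the context size. If your documents vary a lot in length, one alternative is to keep adding documents until a rough token budget is used up. The following is only a sketch of that idea and not what our tool does: the function name `select_within_budget` is made up, and the estimate of about four characters per token is a rule of thumb for English text, not an exact count.

```python
def select_within_budget(documents: list[str], max_tokens: int,
                         chars_per_token: int = 4) -> list[str]:
    """Keep the best-ranked documents until the rough token budget is used up."""
    selected: list[str] = []
    used = 0
    # The documents are assumed to be sorted by relevance, best first
    for text in documents:
        # Rough estimate only; a real tokenizer would give exact numbers
        estimated = len(text) // chars_per_token + 1
        if used + estimated > max_tokens:
            break
        selected.append(text)
        used += estimated
    return selected
```

Stopping at the first document that does not fit, instead of skipping it and trying smaller ones, keeps the result in strict relevance order.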

The results

In our testing, the search results over the transformed documents were much better than the ones over the original documents. The results were a lot more specific and more accurate. We indexed our original documents as well as multiple different transformations of them, and had the search also return the relevance score of each document. The results were quite interesting.

Our internal data contains a document that describes what to do when you miss your connecting train and are “stranded at a train station”.

The relevance score of the original document was 0.8102, and it was only in second place. Another, wrong, document was in first place with 0.8113.

The document that got transformed to questions was in first place with a relevance score of 0.8785. That is a huge difference. Another, much better document followed in second place with a relevance score of 0.8195, and the wrong document that came first in the untransformed search only came in third with 0.8091.

This is of course only a single example, but we tested with a lot of different queries and documents. The results were always better with the transformed documents, and the difference was always quite significant.

Summary

If your semantic search results are not as good as you hoped for, it might be because the contents of the documents and the questions asked to retrieve them are not as similar as they could be. In this case you might want to try transforming your documents into questions, and use these transformed documents to create the embeddings for your search. In our tests, this made the search results a lot more accurate and specific.