ChatDocs is the latest representative of AI projects for locally executable Generative Pre-trained Transformers (GPT), which I will refer to as Local-GPT projects below. These projects make it possible to run Large Language Models (LLMs) completely privately on one’s own computer (often on a standard CPU, without the need for a special GPU) and to interact with one’s own data, which has previously been indexed in a vector database. This makes it possible to chat interactively with the contents of one’s own documents in formats such as PDF, HTML, DOC(X), ODT, TXT, MD, and EPUB. Thanks to the modular structure of Local-GPT projects, adjustments to workflows or code can be made relatively easily.
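The idea of indexing documents and then querying them can be illustrated with a toy sketch. This is not how ChatDocs is implemented: the bag-of-words "embedding", the `ToyVectorIndex` class, and the sample documents are all hypothetical stand-ins for a real embedding model and vector database. The sketch only shows the general flow of embedding chunks, retrieving the closest one, and building a prompt for the LLM.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: word counts (a real setup would use an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorIndex:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self):
        self.entries = []  # list of (source, chunk_text, vector)

    def add(self, source, text):
        self.entries.append((source, text, embed(text)))

    def search(self, query, k=2):
        query_vector = embed(query)
        ranked = sorted(
            self.entries,
            key=lambda entry: cosine(query_vector, entry[2]),
            reverse=True,
        )
        return [(source, text) for source, text, _ in ranked[:k]]


def build_prompt(question, hits):
    """Combine the retrieved chunks and the question into one LLM prompt."""
    context = "\n".join(f"[{source}] {text}" for source, text in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


index = ToyVectorIndex()
index.add("invoice.pdf", "The invoice total is 420 euros and is due in March.")
index.add("notes.md", "The cat sat on the warm windowsill all afternoon.")

question = "When is the invoice due?"
hits = index.search(question, k=1)
prompt = build_prompt(question, hits)
print(prompt)
```

In a real Local-GPT project, the prompt would then be passed to the locally running LLM, and the retrieved sources would be shown alongside the answer.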

The ChatDocs project by Ravindra Marella has existed for less than three weeks but describes itself confidently as follows:

ChatDocs – Chat with your documents offline using AI. No data leaves your system. Internet connection is only required to install the tool and download the AI models. It is based on PrivateGPT but has more features.

Of course, these Local-GPT projects cannot compete in speed or chat performance with commercial variants like those from OpenAI. Queries on an Apple system with an M-series processor usually take between 20 and 70 seconds, which is too slow for real productive use. Instead, these projects are better understood as an experimental environment where AI-interested users can explore how the pieces fit together and test the capabilities of different LLMs.

What sets ChatDocs apart from existing well-known Local-GPT projects like privateGPT and localGPT? Two aspects of ChatDocs stand out: it includes an integrated web server and allows the use of an impressive number of diverse LLMs. Let’s take a closer look at these aspects.

ChatDocs Integrated Web Server

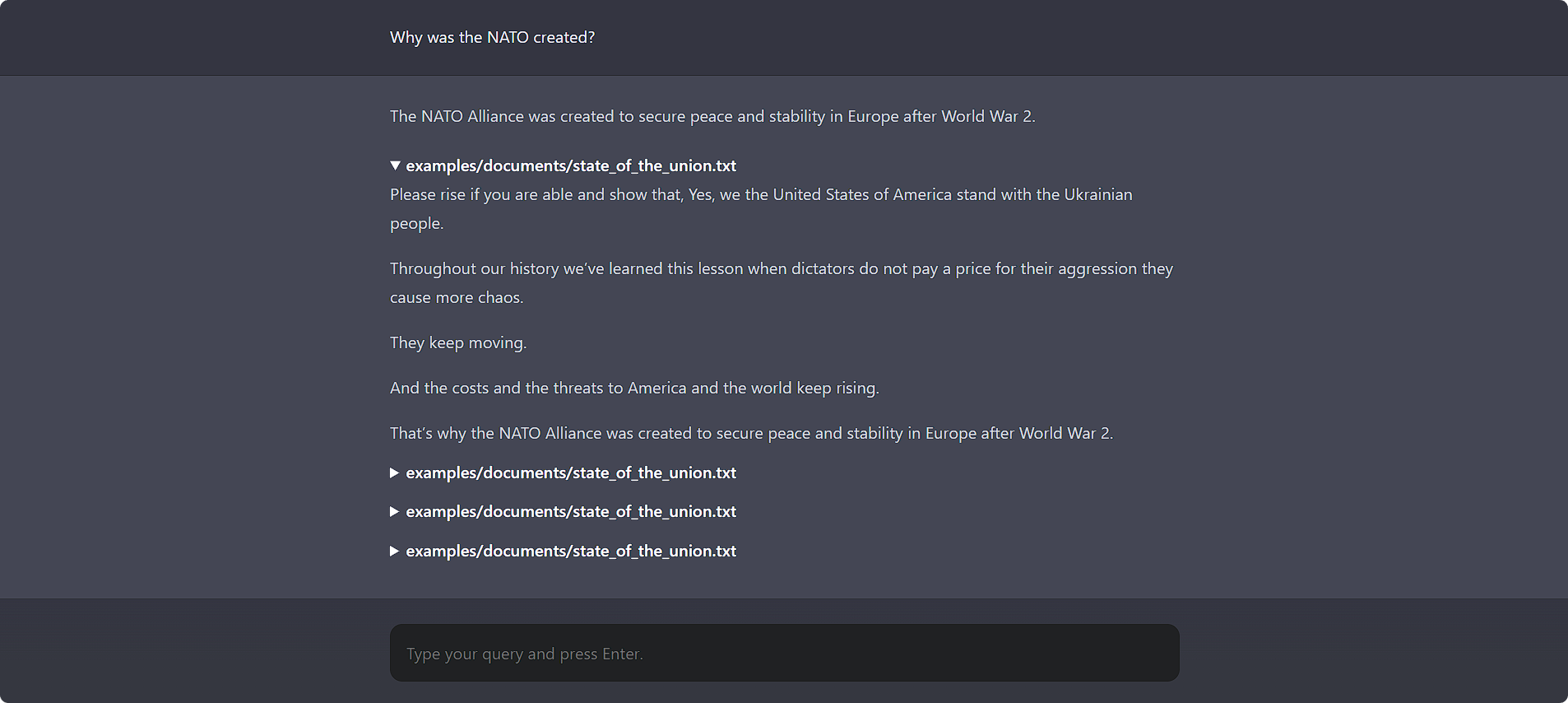

ChatDocs integrates a web server, so users are not limited to a simple shell prompt, as they are with other Local-GPT projects.

Interacting only via a shell prompt quickly becomes a real productivity killer in privateGPT and localGPT once the first wow moments have passed, because output has already scrolled out of the terminal or the font has to be set so small that headaches are almost inevitable. In contrast, ChatDocs’ web presentation allows for a more pleasant display of query results in the browser, with expandable and collapsible source references. This makes the results easier to read.

At the same time, a useful history is created, similar to what users are used to with ChatGPT. Copying the results for further use is much easier.

Extended LLM Support in ChatDocs

For privateGPT and localGPT projects, the selection of usable models/LLMs is currently mostly limited to LLaMA-based models like Alpaca, Vicuna, Guanaco, or Nous-Hermes, and some of the GPT4All-provided models like the GPT-J-based Snoozy or Groovy. Other exciting models like Mosaic’s MPT model, Dolly, Starcoder, or the powerful Falcon model are either not supported or can’t be loaded because of their current model format.

This is partly because the llama.cpp library used in established Local-GPT projects for model inference does not (yet) support every LLM variant. In addition, privateGPT and localGPT have not always kept pace in recent weeks with updates to llama.cpp and its associated Python bindings, llama-cpp-python.

ChatDocs solves the problem very elegantly: it uses its own library, CTransformers, which provides Python bindings for the models on top of the ggml library. The following model types are supported:

| Models | Model Type |
|---|---|
| GPT-2 | gpt2 |
| GPT-J, GPT4All-J | gptj |
| GPT-NeoX, StableLM | gpt_neox |
| LLaMA | llama |
| MPT | mpt |
| Dolly V2 | dolly-v2 |
| StarCoder, StarChat | starcoder |
| Falcon (Experimental) | falcon |

In ChatDocs, CTransformers replaces the widely used llama.cpp, on which privateGPT and localGPT rely, and it is up to date in terms of model file formats. It provides these features:

- Locally stored models of different types can be loaded from disk.

- If the Hugging Face name of a model is specified, CTransformers automatically downloads the model from Hugging Face on first start.

- GPU support is offered for LLaMA models, significantly increasing performance.

- The GPTQ format is supported if the additional auto-gptq package is installed in ChatDocs.
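As a rough illustration of the features listed above, here is a minimal sketch of loading a model through CTransformers. The `MODEL_TYPES` mapping mirrors the table above; the helper names `model_type_for` and `load_model` are hypothetical, and the model path in the usage comment is a placeholder. The sketch assumes the `ctransformers` package is installed and that `AutoModelForCausalLM.from_pretrained` accepts `model_type` and `gpu_layers` as described in the library's README; treat the details as assumptions to check against the version you use.

```python
# Model families and their CTransformers model types, taken from the table above.
MODEL_TYPES = {
    "gpt-2": "gpt2",
    "gpt-j": "gptj",
    "gpt4all-j": "gptj",
    "gpt-neox": "gpt_neox",
    "stablelm": "gpt_neox",
    "llama": "llama",
    "mpt": "mpt",
    "dolly v2": "dolly-v2",
    "starcoder": "starcoder",
    "starchat": "starcoder",
    "falcon": "falcon",
}


def model_type_for(family):
    """Look up the model type string for a model family name (hypothetical helper)."""
    key = family.strip().lower()
    if key not in MODEL_TYPES:
        raise ValueError(f"Unsupported model family: {family!r}")
    return MODEL_TYPES[key]


def load_model(path_or_repo, family, gpu_layers=0):
    """Load a local model file or a Hugging Face repo via CTransformers.

    The import happens here so the mapping above can be used even when
    ctransformers is not installed.
    """
    from ctransformers import AutoModelForCausalLM

    return AutoModelForCausalLM.from_pretrained(
        path_or_repo,
        model_type=model_type_for(family),
        gpu_layers=gpu_layers,  # layers to offload to the GPU; 0 keeps everything on the CPU
    )


# Example usage (placeholder path, not executed here):
#   llm = load_model("/path/to/model.bin", "Falcon")
#   print(llm("AI is going to"))
```

Passing a Hugging Face repository name instead of a local path would trigger the automatic download mentioned in the list.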

ChatDocs Configuration

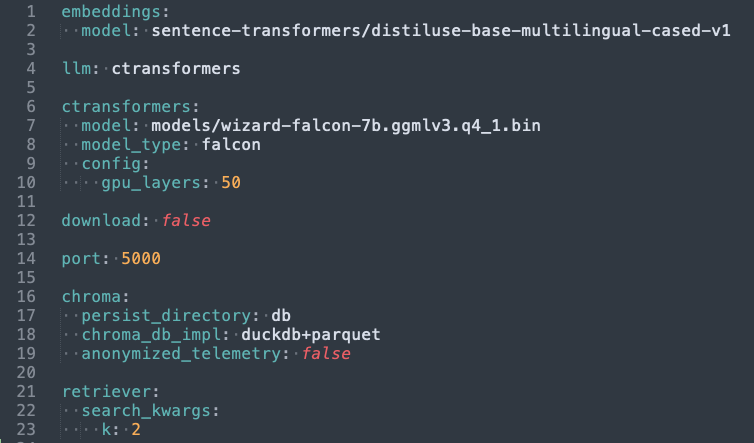

ChatDocs is configured through a YAML file, which offers significantly more structure and flexibility than the env files used by privateGPT and localGPT. The screenshot shows my current configuration for a multilingual sentence transformer and the Falcon-7B LLM in GGML format.
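To give a feel for the shape of such a file, the following sketch builds a comparable configuration as a Python dictionary and prints it as YAML. It does not reproduce the configuration from the screenshot: the key names (`embeddings`, `llm`, `ctransformers`, `model_file`, `context_length`) are my assumption of the ChatDocs layout, and the model and file names are hypothetical placeholders. The small `to_yaml` helper is only there to avoid an extra dependency.

```python
def to_yaml(data, indent=0):
    """Serialize nested dicts with scalar leaves as YAML (enough for this sketch)."""
    lines = []
    pad = "  " * indent
    for key, value in data.items():
        if isinstance(value, dict):
            lines.append(f"{pad}{key}:")
            lines.append(to_yaml(value, indent + 1))
        else:
            lines.append(f"{pad}{key}: {value}")
    return "\n".join(lines)


# All names below are placeholders, not the values from the screenshot.
config = {
    "embeddings": {
        "model": "your-org/multilingual-sentence-transformer",
    },
    "llm": "ctransformers",
    "ctransformers": {
        "model": "your-org/falcon-7b-ggml",
        "model_file": "falcon-7b.ggml.bin",
        "model_type": "falcon",
        "config": {"context_length": 1024},
    },
}

yaml_text = to_yaml(config)
print(yaml_text)
```

The nesting is what env files cannot express: embedding settings and LLM settings live in separate sections, and each backend can carry its own sub-configuration.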

Conclusion

ChatDocs has uniquely positioned itself within the Local-GPT project community in an impressively short amount of time. It has taken an innovative approach, with an integrated web server and support for a wide range of Large Language Models via CTransformers, making it a compelling alternative to existing projects like privateGPT and localGPT.

The ability to interact more intuitively with one’s own data, without the constraints typically associated with shell prompts, combined with greater flexibility in model selection, makes ChatDocs a preferred choice for AI enthusiasts who wish to explore and test different LLMs.

While acknowledging the limitations compared to commercial alternatives in terms of speed or chat performance, ChatDocs is nonetheless pioneering in offering users the opportunity for a more personalized experience as they delve into understanding and utilizing AI capabilities.

In conclusion, ChatDocs proves that even smaller-scale projects can lead innovation by identifying opportunities in existing platforms and improving upon them. It’s an excellent reminder that versatility and adaptability can often outweigh sheer size when it comes to advancement. Therefore, we can look forward to more such unique offerings from this project as well as similar ones across the broader AI landscape.

In an upcoming article, I will demonstrate how I utilize the versatility of the LangChain framework to incorporate the enhanced LLM support of ChatDocs. This integration is achieved using CTransformers in privateGPT with just a few modifications in the privateGPT codebase.